I’ve watched more than one team celebrate a content report like it was a victory parade.

Spoiler: The pipeline didn’t get the memo.

Traffic up. Time on page up. Engagement up. “Our SEO is really working.”

Meanwhile, the pipeline is flat. Sales is still explaining the same thing on every call. Support is still answering the same questions. Product adoption looks like a sad little plateau. And leadership is doing that quiet thing where they stop asking for updates and start updating their mental org chart.

So… what are we measuring, exactly?

Because a piece of content can get a ton of attention and still do nothing useful. It can perform as media while failing completely as infrastructure. It can win the algorithm and lose the business.

That gap is what I mean by content resonance. (Intent is related; that’s another post.)

Engagement is a reaction. Resonance is a change.

Here’s the simplest definition I’ve found that doesn’t collapse into marketing soup:

Engagement measures whether someone interacted with your content.

Resonance measures whether your content stuck well enough to change what someone thinks, says, or does next.

Engagement is the tap on the glass. Resonance is when someone rearranges their furniture.

And the reason this matters (especially if you’re trying to build a marketing function from scratch, or prove ROI to a skeptical exec team) is that engagement is easy to manufacture. Resonance is earned.

A like can be a reflex. A share can be performative. A comment can be someone fighting in your mentions because your headline made them mad.

Resonance is harder to fake because it shows up downstream, where the consequences live.

You can feel the difference in the wild:

- Engagement looks like: “Great post!”

- Resonance looks like: “I forwarded this to my team.” / “We changed our approach.” / “We finally understood what you meant.” / “I tried it and it worked (or didn’t).”

One of those is dopamine. The other is signal.

Why engagement metrics fail (and why it keeps happening)

Marketing teams don’t measure the wrong thing because they’re stupid. They measure the wrong thing because the wrong thing is available, clean, and immediately graphable.

Engagement metrics are comforting. They’re also incomplete.

A few reasons they keep betraying us:

First: passive consumption isn’t value. A view is not a win. A scroll is not trust. A click is sometimes just curiosity followed by disappointment.

Second: post-click behavior is the truth serum. If you promise clarity and deliver fog, people will leave fast. If you actually help, they’ll linger, return, share with context, or go deeper. That’s where the quality signal lives: what happens after the click, not the click itself.

Third: platform incentives are weird, and they shift. I’m not going to pretend we can reverse engineer every ranking system. But we can observe outcomes: content that people actually use tends to travel farther over time than content that’s optimized to be glanced at and forgotten. Humans reward usefulness. Platforms notice humans being satisfied.

And fourth (the one nobody wants to say out loud): a lot of content is built to look like work, not to create impact. It exists because “we should publish something,” not because it solves a real problem. It’s busywork wearing a blazer.

The uncomfortable part: you have to define “meaningful action”

Resonance only makes sense if you know what “success” looks like for your org.

That sounds obvious. It’s not. Most teams skip it.

Meaningful action is not universal. A seed-stage founder trying to get their first ten design partners should not measure content the same way as a Series C company with an enterprise sales team and a mature product.

So the goal isn’t to adopt some generic definition of action. The goal is to choose your definition (deliberately) and then build measurement around it.

At different companies, “meaningful action” might mean:

A product-led growth (PLG) company might care about signups, activation, time-to-value, feature adoption, and expansion triggers.

A sales-led company might care about qualified meetings, deal influence, cycle compression, and “this link showed up in the thread.”

A devtool might care about quickstarts completed, integrations shipped, docs depth, fewer repetitive support tickets, or the number of times a tutorial becomes “the link we send everyone.”

A category creator might care about repeat mentions, inbound that references a specific POV, and whether your framing starts showing up in other people’s words.

The key is that you can’t measure resonance if you haven’t decided what change you’re trying to create.

If you don’t pick a definition, your metrics will pick one for you. And they will pick the easiest thing to count.

The Content Resonance Framework

Resonance isn’t one moment. It’s a sequence.

Most content doesn’t fail because it’s “bad.” It fails because it breaks at a specific point in the sequence, and then teams try to fix the wrong thing. They throw more distribution at a recognition problem. They polish copy for an action problem. They add CTAs to a connection problem. It’s chaos with nicer charts.

Here’s the model I use to diagnose it:

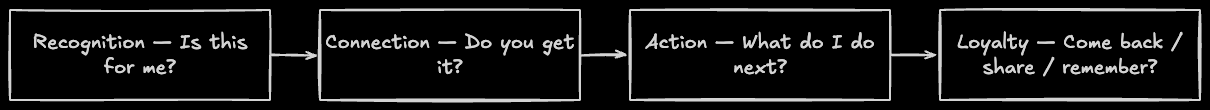

Recognition → Connection → Action → Loyalty

Not because stages are magical. Because they’re practical.

Recognition: “Is this for me?”

This is the first few seconds. The reader is not deciding whether to learn from you. They’re deciding whether to stay.

Recognition fails when the promise is vague, the headline is generic, the intro meanders, or the content doesn’t name the real problem fast enough.

If your bounce rate is high and few visitors even start scrolling, don’t “promote harder.” Fix the promise.

Connection: “Do you actually get it?”

If recognition gets you a chance, connection earns trust.

Connection is where the reader thinks: “yes, this is my reality,” or “finally, someone said it clearly,” or “this person understands the tradeoffs.”

Connection fails when content is technically correct but emotionally vacant. Or when it’s polished but generic. Or when it tries to sound smart instead of being useful.

If people start the piece but don’t finish it (time on page and scroll depth diverge, for example decent time on page but few readers reaching the bottom), you didn’t lose them because they’re lazy. You lost them because you didn’t deliver.

Action: “What do I do next?”

Action is where content stops being a nice brand artifact and starts being a growth asset.

Action can be obvious (sign up, book a call) or subtle (forward it internally, start a trial, open docs, run the quickstart, implement the pattern).

Action fails when the next step is mismatched or too high friction. When you ask for a demo from someone who’s still trying to understand what the product does. When the content teaches, but never bridges to application. When the CTA exists but feels like a different conversation.

If your content gets read but doesn’t move anyone forward, you likely have an action problem, not a writing problem.

Loyalty: “Do I come back? Do I remember you?”

This is the compounding stage. It’s the part that turns content into gravity.

Loyalty looks like return visits, direct traffic to a specific post, repeat sharing, sales teams using a link without being asked, “I’ve been following your writing” inbound, and prospects referencing your framing months later like it’s common sense.

Loyalty fails when your best content isn’t maintained, isn’t findable, or doesn’t connect into a coherent web of ideas. If your site is a pile, people don’t return. If it’s a map, they do.

Measuring resonance with the stack you already have

If you have web analytics, product analytics, and HubSpot (or similar), you’re not missing tools.

You’re missing a model.

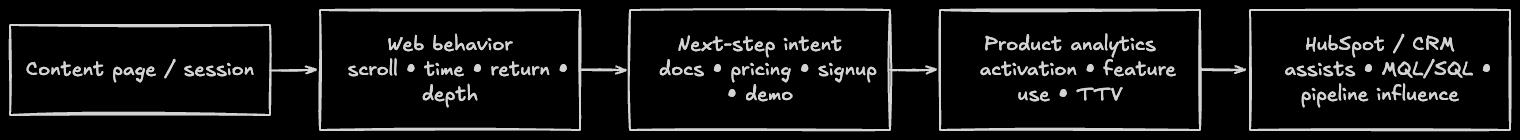

The easiest way to measure resonance is to stop treating content as a pageview machine and start treating it as part of a behavior chain.

Here’s what I look for, in human terms:

If recognition is working, people don’t bounce immediately. They scroll. They settle in.

If connection is working, they don’t just skim. They spend time, they reach the bottom, they click deeper, they come back later. You start seeing the “expensive” forms of response: replies with specificity, forwards, bookmarks, sales mentions.

If action is working, something measurable happens next. Not necessarily “buy now,” but a next step that matches intent: signup, docs visit, trial start, activation event, demo request, newsletter subscription, whatever you defined as meaningful for your business.

If loyalty is working, the piece becomes a reference. Return visits climb. Direct traffic grows. People share it without you prompting. You see it show up in conversations you weren’t in.
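To make those four checks concrete, here is a rough sketch in Python that walks the stages in order and reports the first one that looks broken. Everything in it is an assumption for illustration: the `PieceMetrics` fields, the `first_broken_stage` helper, and especially the threshold numbers, which are placeholders you would replace with baselines from your own analytics.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PieceMetrics:
    """Per-piece rates, each between 0.0 and 1.0 (hypothetical field names)."""
    bounce_rate: float        # sessions that leave without interacting
    scroll_start_rate: float  # sessions that scroll at all
    completion_rate: float    # sessions that reach the bottom of the piece
    next_step_rate: float     # sessions followed by your "meaningful action"
    return_rate: float        # readers who come back to the piece later


# Placeholder thresholds, not benchmarks. Tune them to your own baselines.
THRESHOLDS = {
    "max_bounce_rate": 0.70,
    "min_scroll_start_rate": 0.50,
    "min_completion_rate": 0.30,
    "min_next_step_rate": 0.02,
    "min_return_rate": 0.10,
}


def first_broken_stage(m: PieceMetrics, t: dict = THRESHOLDS) -> Optional[str]:
    """Return the earliest stage that appears to fail, or None if all pass."""
    # Recognition: people bounce and never start scrolling.
    if m.bounce_rate > t["max_bounce_rate"] and m.scroll_start_rate < t["min_scroll_start_rate"]:
        return "recognition"
    # Connection: people start reading but do not finish.
    if m.completion_rate < t["min_completion_rate"]:
        return "connection"
    # Action: the piece gets read, but nothing happens next.
    if m.next_step_rate < t["min_next_step_rate"]:
        return "action"
    # Loyalty: people act once but never come back.
    if m.return_rate < t["min_return_rate"]:
        return "loyalty"
    return None


# A widely read post that nobody acts on.
applause_post = PieceMetrics(
    bounce_rate=0.40,
    scroll_start_rate=0.80,
    completion_rate=0.50,
    next_step_rate=0.001,
    return_rate=0.05,
)
print(first_broken_stage(applause_post))
```

The checks run in sequence on purpose: if recognition is broken, the later numbers are computed on too few readers to mean much, so the earliest failure is the one to fix first.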

The biggest practical shift is this: tie content sessions to downstream behavior.

If someone reads a guide and then triggers a product event within the same session (or within a reasonable lookback window), that’s resonance. If content-assisted conversions show up in HubSpot, that’s resonance. If sales notes repeatedly mention a specific URL, that’s resonance.
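Here is a minimal sketch of that join in Python, assuming you can export content views and product events that share a user ID. The `ContentView` and `ProductEvent` shapes, the `content_assisted_events` name, and the seven-day default are illustrative assumptions, not something your analytics tool or HubSpot provides under those names.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class ContentView:
    user_id: str
    url: str
    viewed_at: datetime


@dataclass(frozen=True)
class ProductEvent:
    user_id: str
    name: str
    occurred_at: datetime


def content_assisted_events(views, events, lookback=timedelta(days=7)):
    """Count, per URL, the product events that followed a view by the same user.

    An event counts for a URL if that user viewed the URL at or before the
    event, and no earlier than `lookback` before it. Each event credits each
    distinct URL at most once.
    """
    counts = {}
    for event in events:
        credited_urls = {
            view.url
            for view in views
            if view.user_id == event.user_id
            and timedelta(0) <= event.occurred_at - view.viewed_at <= lookback
        }
        for url in credited_urls:
            counts[url] = counts.get(url, 0) + 1
    return counts


views = [
    ContentView("u1", "/guide", datetime(2024, 1, 1, 10)),
    ContentView("u1", "/future-of-industry", datetime(2023, 12, 1, 10)),
    ContentView("u2", "/future-of-industry", datetime(2024, 1, 1, 10)),
]
events = [
    ProductEvent("u1", "trial_started", datetime(2024, 1, 3, 9)),
    ProductEvent("u2", "trial_started", datetime(2024, 2, 1, 9)),
]
print(content_assisted_events(views, events))
```

This credits every piece a user read inside the window, which is a deliberately simple choice rather than a proper attribution model. It is enough to show which URLs keep appearing just before the behavior you care about.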

No, attribution won’t be perfect. Nothing is. But “imperfect signal” is still better than “pretty chart of vibes.”

A composite example

Let’s say you publish two pieces in the same month.

One is a high-level “future of the industry” post. It gets shared widely. Big traffic. Lots of applause. Everyone says it’s smart. It makes your brand look sophisticated in a way that plays nicely on LinkedIn.

The other is a deeply practical post: a real implementation guide with the boring parts included. It gets a fraction of the traffic. Almost no public engagement. But people who read it do something afterward: they start a trial, complete the quickstart, or forward it to an engineer with “this is the clearest version.”

In a reach-and-engagement worldview, you’d crown the first post and quietly move on.

In a resonance worldview, the second post is a business asset. It reduces friction. It accelerates outcomes. It earns trust. It becomes infrastructure.

That’s the game.

And yes, you want both over time. But if you’re early-stage, resource-constrained, or under pressure to prove impact, you should bias hard toward the work that moves people, not the work that performs.

Why this matters even more for technical audiences

Developers have a hypersensitive fluff detector. They’ve been trained by decades of marketing to assume they’re being lied to until proven otherwise.

They don’t hate marketing because they’re grumpy. They hate marketing because “two-minute setup” often means “three-hour yak shave,” and “developer-first” brands keep shipping docs that feel like they were assembled from three Slack messages and a prayer.

So resonance for technical audiences is brutally practical. It’s specificity. It’s honesty. It’s respect for time. I’ve written before about why developer content is a conversation, not a broadcast; resonance is what you get when that conversation actually lands.

If you can write content that resonates with developers, it usually resonates with executives too, because clarity scales.

If it resonates with executives but not developers, you probably wrote a brand poem.

What resonance-first content strategy actually requires

This isn’t “write better intros.” It’s not “add more CTAs.” It’s an operating model.

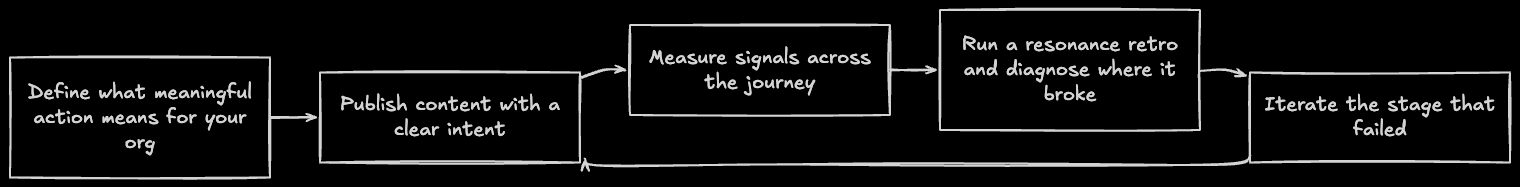

Resonance-first content requires three things:

You have to define meaningful action.

You have to instrument the journey from content → behavior → outcome.

And you have to run a feedback loop that causes iteration, not just reporting.

The simplest version is a monthly “resonance retro.” Pick a handful of pieces and ask, honestly: where did this break? Did it fail at recognition, connection, action, or loyalty? What would we change if we were optimizing for impact, not applause?

Because if your content reporting doesn’t change what you do next, it’s not strategy. It’s performance theater.

The quiet hiring manager subtext

If you’re a marketing leader or founder reading this and thinking, “cool, but we don’t have anything like that,” you’re not alone.

Most teams don’t need more content. They need content that behaves like infrastructure: clear, maintained, measurable, and built around real outcomes.

That’s the work. That’s what makes content compound. And that’s the difference between “we post a lot” and “marketing actually moves the business.”

Resonance isn’t a vibe.

It’s a system.